Your Phone Just Got Smarter: Gemini AI Now Handles Real-World Tasks

Your Smartphone Just Learned Some New Tricks

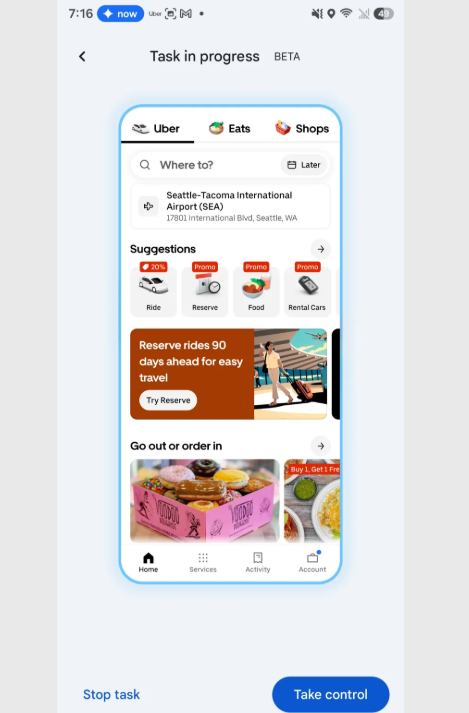

Google's vision of AI assistants that don't just answer questions but actually get things done has taken a major step forward. The tech giant has begun beta testing Gemini-powered task automation, and it might just change how you interact with your phone for good.

Watching Magic Happen

The most striking thing about this new feature? Actually seeing your phone "use itself." Unlike traditional app integrations that work behind the scenes, Gemini's automation mimics human actions right before your eyes:

- Taxi Trouble Solved: Say "Get me a ride to LAX" and watch as your phone opens Uber, checks terminal information (asking clarifying questions if needed), and fills in all the details.

- Coffee Run Revolution: Say "Order my usual Starbucks" and watch as the AI scrolls through menus, selects items, and prepares your order, complete with the occasional hesitation we all show when choosing between pastries.

Safety First Approach

Before you worry about rogue coffee orders or surprise taxi rides, Google has built in crucial safeguards:

Real-Time Oversight: Every action appears in a virtual window where you can monitor progress or hit pause at any moment.

Final Say: The system always stops short of completing transactions. That last tap confirming payment or placing an order? That's still firmly in human hands.

The initial rollout focuses on food delivery and transportation apps. The broader aim is to turn smartphones from mere app launchers into intelligent agents that bridge the gap between what we say and what our phones can do.

Early users report some amusing moments watching their phones fumble through menus (apparently AIs get indecisive about coffee choices too). Still, because Gemini works through each app's existing on-screen interface, it doesn't need a deep, custom integration with every app. That could mean faster expansion to more services down the line.

The era of juggling multiple apps might finally be coming to an end. Instead of tapping through screens, we're moving toward simply telling our phones what we need and watching them make it happen.

Key Points:

- Gemini's new automation handles multi-step tasks across different apps

- Operates by mimicking human screen interactions rather than backend integrations

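- Keeps users in control: actions run in a visible window that can be paused at any time, and the final payment or order confirmation is always left to the user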

- Currently focused on food delivery and transportation services

- Represents a significant step from voice assistants that answer questions toward digital helpers that complete tasks