Tongyi Lab Unveils Next-Gen Voice Models That Respond Like Humans

Tongyi Lab's Voice AI Breakthrough: Speaking Human

In a significant advancement for voice technology, Tongyi Lab has launched Fun-CosyVoice3.5 and Fun-AudioGen-VD, two models designed to follow plain-language instructions much as a person would. Gone are the days of memorizing specific commands; now you can simply tell these systems what you need.

The Human Touch in Machine Speech

The real magic lies in how these models interpret requests. Want a villainous voice whispering threats? Or perhaps a cheerful barista taking your coffee order? Just say so. The system handles the rest, eliminating the technical jargon barrier that once separated creators from powerful voice tools.

Fun-CosyVoice3.5 brings impressive upgrades:

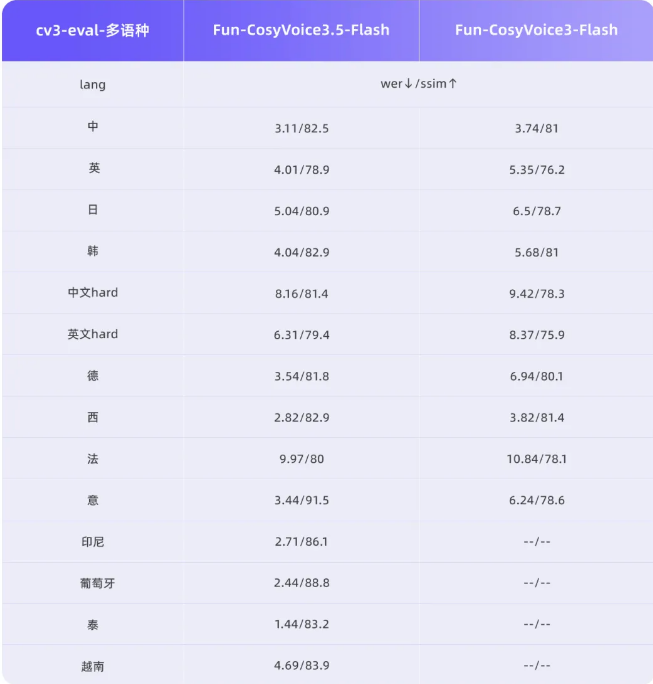

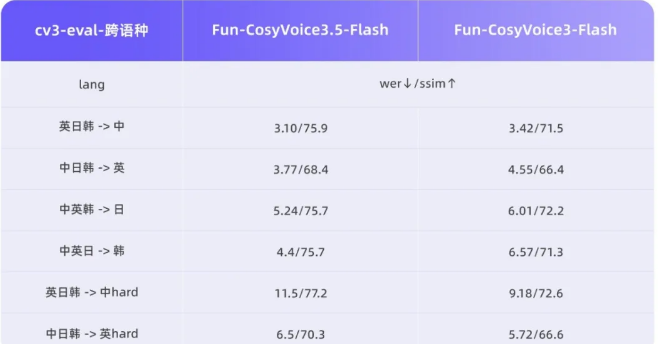

- Supports four additional languages including Thai and Indonesian

- Cuts pronunciation errors by nearly 70%

- Delivers response times about 35% faster than previous versions

The secret sauce combines two reinforcement learning techniques, DiffRO (Differentiable Reward Optimization) and GRPO (Group Relative Policy Optimization), which help the model pick up subtle speech patterns that most systems miss.
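The article does not say how these techniques are applied inside the model, so the following is only a rough illustration of the idea usually associated with GRPO (Group Relative Policy Optimization): several candidate outputs for the same prompt are scored, and each one is judged relative to the rest of its group. The function name, the reward values, and the use of a population standard deviation are all assumptions made for this sketch, not details from Tongyi Lab.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Score each sample relative to the other samples in its group.

    Hypothetical helper for illustration: subtract the group mean and
    divide by the group standard deviation, so above-average samples get
    a positive advantage and below-average samples a negative one.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std; an assumption of this sketch
    return [(r - mu) / (sigma + eps) for r in rewards]


# Made-up reward scores for four candidate renderings of one prompt
rewards = [0.2, 0.5, 0.9, 0.4]
advantages = group_relative_advantages(rewards)

for reward, advantage in zip(rewards, advantages):
    print(f"reward={reward:.2f}  advantage={advantage:+.2f}")
```

In a training loop of this kind, the candidates with positive advantages would be reinforced and those with negative advantages discouraged; what the reward actually measures (for example, pronunciation accuracy) is left open here.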

Meanwhile, Fun-AudioGen-VD transforms sound design:

- Adjusts gender, emotion and even room acoustics on command

- Creates everything from single voices to complex ambient scenes

- Perfect for gaming environments or film dubbing workflows

Why This Matters Beyond Tech Circles

The implications stretch far beyond impressive demos. Film studios can prototype character voices instantly. Game developers might slash weeks off production schedules. Even virtual assistants could soon respond with emotional intelligence rather than robotic precision.

The technology arrives as demand grows rapidly: industry analysts project that the voice synthesis market will double by 2028 as consumers embrace more natural digital interactions.

Key Points:

- Natural commands replace technical parameters

- Nearly 70% fewer pronunciation errors on uncommon words and phrases

- 35% faster response times than previous versions

- New language support expands global accessibility

- Emotional range control unlocks creative potential