Tongyi Lab's New AI Tool Brings Hollywood-Quality Dubbing to Everyone

Revolutionizing Voice Acting with AI

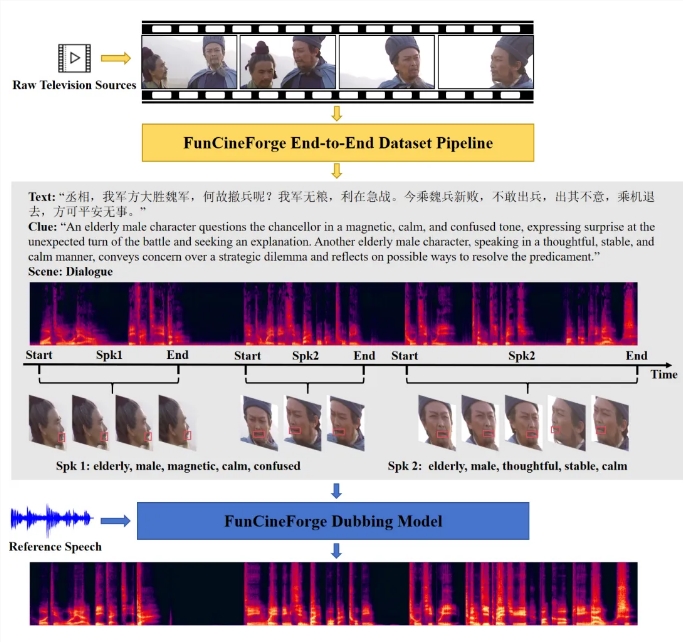

Imagine watching your favorite foreign film where every actor's voice matches their facial expressions: the subtle quiver of emotion, the precise timing of each word. This cinematic dream is now within reach thanks to Fun-CineForge, a dubbing model newly open-sourced by Tongyi Lab, which the lab describes as the first AI model capable of handling complex multi-character dialogue with Hollywood-level precision.

Solving the Lip-Sync Dilemma

Traditional AI dubbing often falls flat when faced with film-quality demands. The results can feel disconnected: voices that don't match mouth movements, or that lack emotional depth. Fun-CineForge tackles these issues head-on with four key innovations:

- Lip Sync Magic: The AI analyzes facial movements frame by frame to generate speech that stays in sync with the actors' mouths

- Emotional Intelligence: By combining facial analysis with text context, it captures nuanced human emotions

- Voice Consistency: Characters maintain distinct vocal identities even in rapid-fire conversations

- Precision Timing: Voices appear exactly when they should, even if the speaker momentarily leaves the frame

Behind the Scenes: How It Works

The breakthrough comes from two technical advancements that set Fun-CineForge apart:

The CineDub Dataset

- A training set in which transcription errors fall below 2%, thanks to an innovative error-correction system. Cleaner transcripts mean the model learns from more accurate pairings of real-world dialogue and text.
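The article does not state which metric stands behind the 2% figure. Transcription quality is commonly reported as word error rate (WER): the minimum number of word substitutions, deletions, and insertions needed to turn a transcript into a reference, divided by the number of reference words. Assuming WER is the metric here, a minimal sketch (the `word_error_rate` helper is hypothetical, not part of Fun-CineForge) looks like this:

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the first i-1 reference words
    # and the first j hypothesis words (row 0 = all insertions).
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)  # column 0 = all deletions
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # deletion
                curr[j - 1] + 1,     # insertion
                prev[j - 1] + cost,  # substitution (or match when cost is 0)
            )
        prev = curr
    return prev[len(hyp)] / len(ref)


if __name__ == "__main__":
    ref = "we have to leave before the storm hits"
    hyp = "we have to leave before a storm hits"
    print(f"WER: {word_error_rate(ref, hyp):.3f}")  # one substitution in 8 words -> WER: 0.125
```

Under this reading, a WER below 2% means fewer than 2 word errors per 100 reference words. Real scoring toolkits usually normalize case and punctuation before comparing, which this sketch skips.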

Four-Modality Architecture

- Going beyond standard audio-text models, it incorporates visual cues (lip movements and expressions), text context (emotional tone), audio references (voice samples), and, crucially, timing data. This 'time modality' is what enables millisecond-level synchronization.
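The article does not describe how Fun-CineForge encodes its time modality. As a purely illustrative sketch, assuming timing data arrives as speaker-labelled segments with millisecond timestamps, the snippet below maps those segments onto video frames so that each frame records who should be speaking, even when that speaker is off-screen. The `Segment` type, the `frame_speaker_labels` function, and the 25 fps default are all hypothetical choices for this example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Segment:
    """Hypothetical timing record: which speaker talks, and when (in ms)."""
    speaker: str
    start_ms: int
    end_ms: int  # exclusive


def _ceil_div(a: int, b: int) -> int:
    """Integer ceiling division for non-negative a and positive b."""
    return -(-a // b)


def frame_speaker_labels(
    segments: List[Segment], duration_ms: int, fps: int = 25
) -> List[Optional[str]]:
    """Label each video frame with the speaker active at that frame's start time.

    Frame f starts at f * 1000 / fps ms and belongs to a segment when
    start_ms <= f * 1000 / fps < end_ms. Frames with no active speaker stay None;
    if segments overlap, later segments in the list overwrite earlier ones.
    """
    n_frames = _ceil_div(duration_ms * fps, 1000)
    labels: List[Optional[str]] = [None] * n_frames
    for seg in segments:
        # First frame whose start time is >= start_ms.
        first = _ceil_div(seg.start_ms * fps, 1000)
        # First frame whose start time is >= end_ms, clipped to the clip length.
        stop = min(_ceil_div(seg.end_ms * fps, 1000), n_frames)
        for f in range(first, stop):
            labels[f] = seg.speaker
    return labels


if __name__ == "__main__":
    dialogue = [Segment("A", 0, 120), Segment("B", 200, 400)]
    print(frame_speaker_labels(dialogue, duration_ms=400))
    # ['A', 'A', 'A', None, None, 'B', 'B', 'B', 'B', 'B']
```

Keeping timestamps in integer milliseconds and converting to frame indices only at the end keeps the mapping exact. At 25 fps each frame spans 40 ms, so the frame grid, not the timestamps, sets the effective resolution of this representation.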

Real-World Performance That Impresses

Early benchmarks show Fun-CineForge outperforming existing systems such as DeepDubber-V1 on every key metric:

- 30% improvement in word recognition accuracy

- 40% better lip-sync scores

- Near-perfect voice consistency in multi-speaker tests

The model particularly shines in duets and group conversations, scenarios where previous AI tools struggled noticeably.

Access for All Creators

In keeping with Tongyi Lab's commitment to open innovation, Fun-CineForge is available through multiple platforms:

- GitHub for developers who want to dive into the code

- HuggingFace for easy model access

- ModelScope for Chinese developers

This release could democratize high-quality dubbing, making professional-grade voice work accessible to indie filmmakers, educators, and content creators worldwide.