OpenClaw Security Woes Deepen as Social Network Exposes Sensitive Data

OpenClaw's Security Crisis Escalates

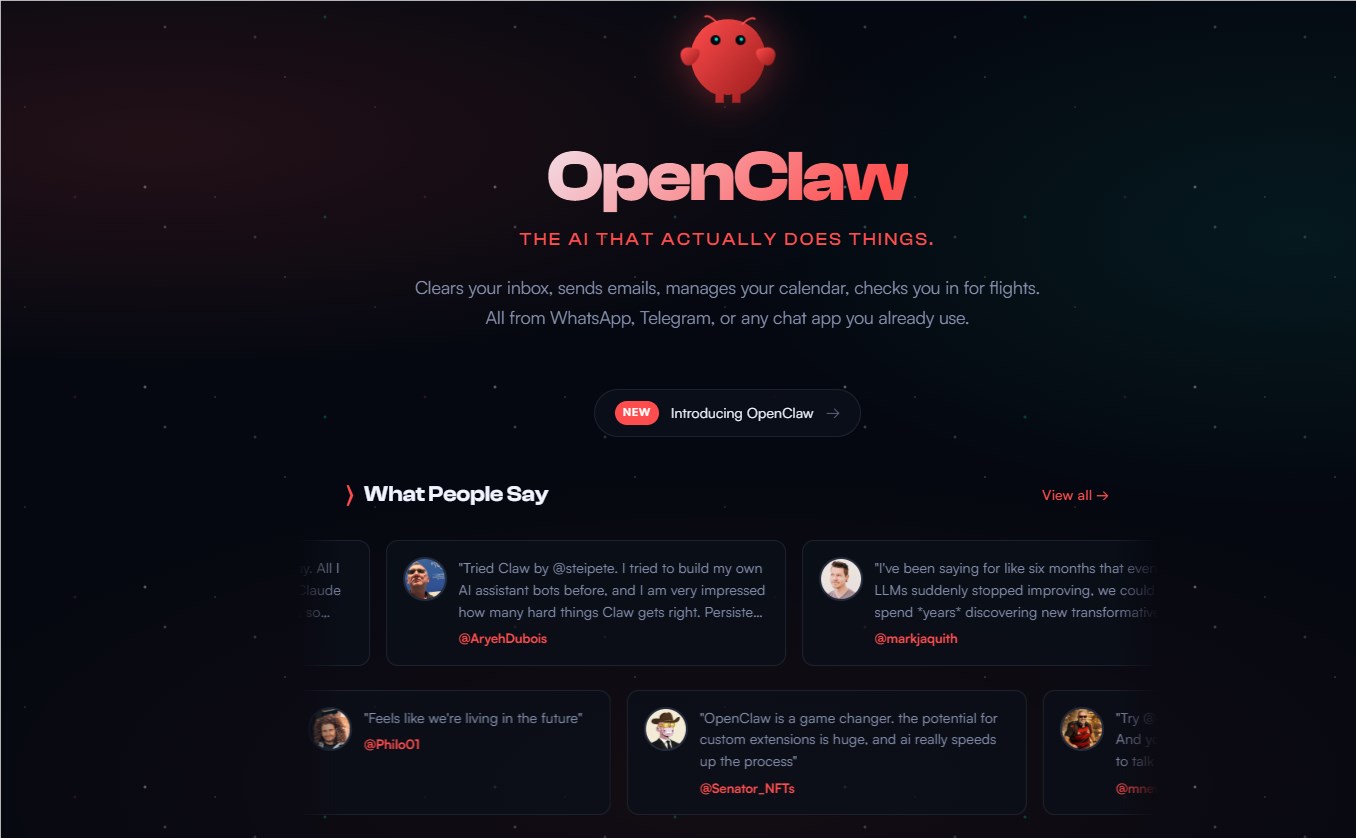

The AI agent platform OpenClaw is caught in a cybersecurity nightmare, struggling to contain multiple vulnerabilities that put its users at risk. What began as promising technology to simplify digital life has become a case study in security oversights.

Critical Vulnerabilities Surface

Security researcher Mav Levin recently exposed a particularly alarming flaw: attackers could execute malicious code on users' systems simply by tricking them into visiting a compromised website. This "one-click RCE" (remote code execution) vulnerability exploited weaknesses in OpenClaw's WebSocket implementation and bypassed critical safeguards such as sandboxing and user confirmation prompts.

The development team patched the hole quickly, but the fix did little to ease broader concerns about the platform's overall security posture. "When you see fundamental flaws like this," Levin noted, "it makes you wonder what other vulnerabilities might be lurking."
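The report does not spell out the exact bug. One well-known class of WebSocket weakness that lets an ordinary web page reach a service running on the user's own machine is cross-site WebSocket hijacking, where the server accepts a handshake without checking its Origin header. The Python sketch below assumes that class of flaw purely for illustration; it is not OpenClaw's code or its fix, and the allow-list, port, and function name are hypothetical.

```python
from typing import Mapping

# Hypothetical allow-list: only pages served by a local control UI on a
# made-up port may open a WebSocket to the local service.
ALLOWED_ORIGINS = frozenset({"http://localhost:8080", "http://127.0.0.1:8080"})


def origin_is_allowed(headers: Mapping[str, str],
                      allowed: frozenset = ALLOWED_ORIGINS) -> bool:
    """Return True only if the handshake's Origin header is on the allow-list.

    Browsers send Origin on WebSocket handshakes and page scripts cannot
    override it, so a local server can use it to refuse connections opened
    by arbitrary websites the user happens to visit.
    """
    # HTTP header names are case-insensitive, so normalise before lookup.
    normalised = {name.lower(): value for name, value in headers.items()}
    origin = normalised.get("origin")
    # No Origin header: reject here; non-browser clients would need
    # a separate authentication path.
    if origin is None:
        return False
    return origin.rstrip("/").lower() in allowed


if __name__ == "__main__":
    print(origin_is_allowed({"Origin": "http://localhost:8080"}))
    print(origin_is_allowed({"Origin": "https://evil.example"}))
```

An Origin check alone is not a complete defence; in this sketch it only stops a random web page from silently opening a connection, and a real service would still need authentication on top of it.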

Database Exposure Compounds Problems

Just as the dust began settling on the RCE issue, another bombshell dropped. Jamieson O'Reilly discovered that Moltbook, an AI agent social network closely tied to OpenClaw, had left its database completely exposed because of configuration errors. The oversight let anyone read sensitive API keys belonging to prominent AI agents, including agents run by well-known experts in the field.

The implications are troubling. With these credentials, bad actors could impersonate verified accounts to spread misinformation or run phishing campaigns. More concerning still, many OpenClaw users had connected AI assistants that read their SMS messages and manage their email to Moltbook, potentially exposing personal communications.

Security vs Speed Dilemma

The consecutive security failures highlight what experts describe as a growing tension between rapid development cycles and proper safeguards. In the race to deploy new features and attract users, basic security audits often get deprioritized - until disaster strikes.

"These aren't sophisticated attacks," O'Reilly observed. "We're talking about fundamental protections that should be standard practice for any platform handling sensitive data."

The incidents serve as a wake-up call for both developers and users in the AI space. As platforms become more interconnected through APIs and integrations, vulnerabilities can cascade across ecosystems with alarming speed.

Key Points:

- Critical vulnerability patched: OpenClaw fixed a dangerous flaw allowing remote code execution through malicious links

- Database exposure: Moltbook's misconfigured servers leaked sensitive API keys of prominent AI agents

- Security concerns mount: Researchers warn that rapid development cycles often neglect essential protections

- Interconnected risks: Vulnerabilities in one platform can create ripple effects across linked services