OpenClaw Security Woes Deepen as New Vulnerabilities Emerge

OpenClaw's Security Crisis Worsens

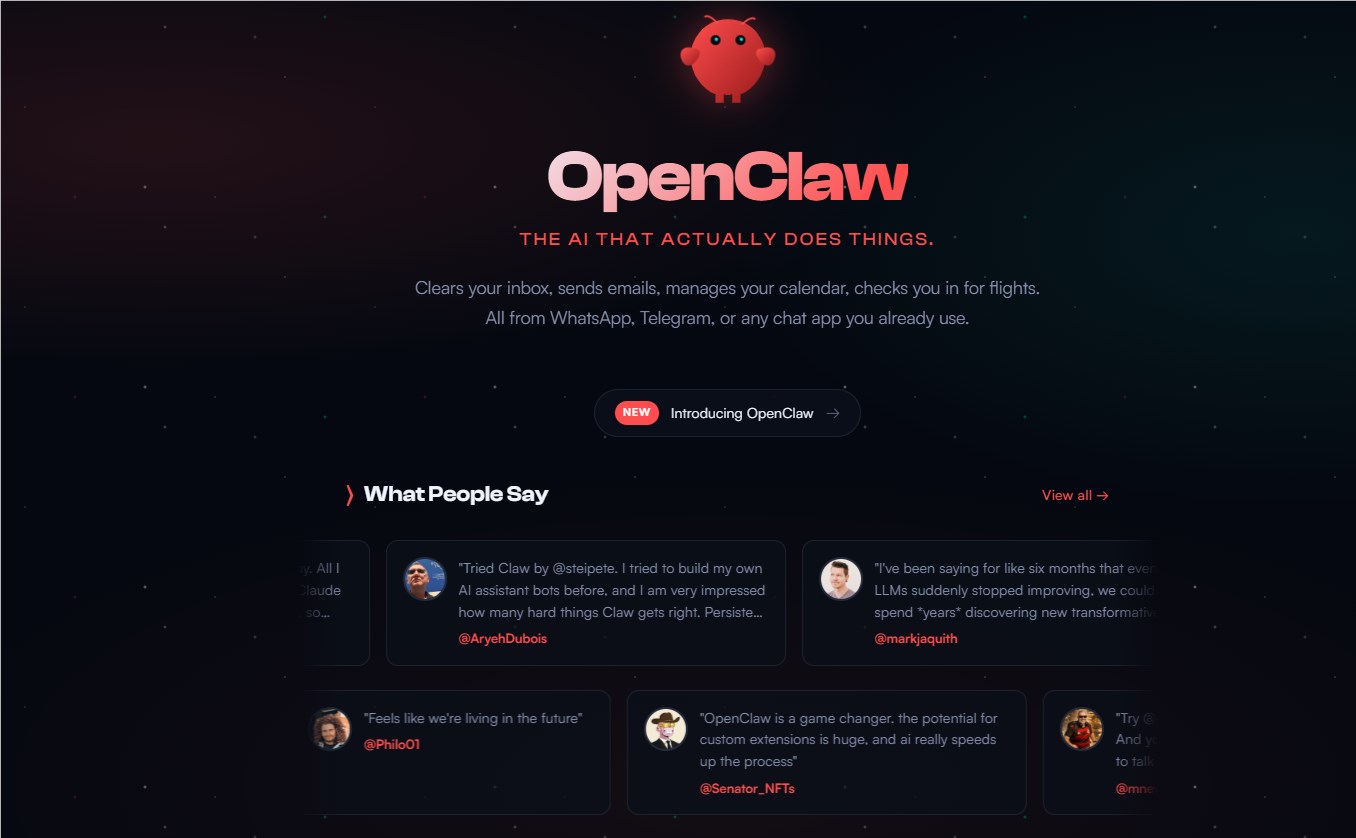

The AI platform OpenClaw (formerly ClawdBot) can't seem to catch a break when it comes to security. Just after the team fixed one critical vulnerability, researchers uncovered yet another serious exposure, this time in the project's unofficial but widely used social network component.

The One-Click Nightmare

Security researcher Mav Levin recently demonstrated how attackers could compromise OpenClaw systems with frightening ease. By exploiting an unsecured WebSocket connection, malicious actors could execute arbitrary code on a victim's machine through a single click, with no warnings and no second chances. The team rushed out a patch, but the pace at which new issues keep surfacing raises troubling questions.

"This wasn't just some theoretical risk," Levin explained. "We're talking milliseconds from clicking a link to complete system takeover. The attack bypassed every security measure users typically rely on."

Database Disaster Strikes Again

Before the dust could settle on the WebSocket fix, security analyst Jamieson O'Reilly discovered that Moltbook, OpenClaw's de facto social network for AI agents, had left its database completely exposed. The misconfiguration allowed anyone to access sensitive API keys, including those belonging to high-profile users such as AI luminary Andrej Karpathy.

Imagine waking up to find that your AI agent has been posting scams or extremist content under your name without your knowledge. That is precisely the risk Moltbook users face until every exposed key has been rotated.

A Pattern of Neglect?

Security professionals observing these incidents note a concerning trend. "When projects prioritize rapid iteration over security fundamentals, we see exactly this pattern," said cybersecurity consultant Elena Petrov. "One vulnerability gets patched while two more emerge elsewhere in the ecosystem."

The Moltbook exposure is particularly worrying because many OpenClaw users give their agents access to sensitive functions such as reading SMS messages and managing email. A leaked key could therefore open attack vectors that reach far beyond simple social media impersonation.

Key Points:

- Critical flaw patched: OpenClaw fixed a WebSocket vulnerability enabling one-click remote code execution

- New exposure discovered: Moltbook's database was left publicly accessible, leaking sensitive API keys

- Systemic concerns: Experts warn these incidents reveal deeper security process failures

- Real-world risks: Compromised accounts could enable financial fraud and identity theft