OpenAI's GPT-5.4 Leak Reveals Game-Changing Memory Capabilities

OpenAI's Next Leap: GPT-5.4 Details Surface in Accidental Leak

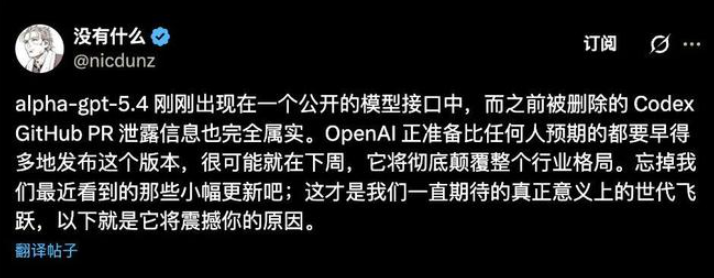

The AI development community buzzed with excitement this week when an OpenAI engineer accidentally included unreleased GPT-5.4 code in a public repository update. Though the code was quickly removed, the slip offered tantalizing glimpses of what might be coming next in large language model technology.

Memory That Lasts

The most striking revelation? GPT-5.4 appears poised to solve one of current AI's biggest limitations - its goldfish-like memory. The leaked specs suggest:

- Massive context windows: Up to 2 million tokens, well beyond what most current models offer

- True persistence: Unlike today's session-based chats, GPT-5.4 could maintain workflow states between interactions

Imagine an AI that remembers your project details and preferences like a human colleague would - that's the promise of this "stateful AI" approach.
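The leak says nothing about how GPT-5.4 would implement persistence, so the following is only a rough sketch of the general idea, not OpenAI's design. It shows the application-side pattern developers already use to approximate "stateful" behavior with session-based models: save the workflow state when a session ends and reload it when the next one starts. The `WorkflowStore` class, the `run_session` function, and the state fields are all hypothetical names invented for this illustration.

```python
import json
import tempfile
from pathlib import Path


class WorkflowStore:
    """Hypothetical client-side store that keeps workflow state on disk."""

    def __init__(self, path):
        self.path = Path(path)

    def load(self):
        # Return the saved state, or a fresh one on the very first session.
        if self.path.exists():
            return json.loads(self.path.read_text(encoding="utf-8"))
        return {"preferences": {}, "completed_steps": []}

    def save(self, state):
        self.path.write_text(json.dumps(state, indent=2), encoding="utf-8")


def run_session(store, new_step, preferences=None):
    """Simulate one interaction: restore state, do some work, persist it."""
    state = store.load()
    state["preferences"].update(preferences or {})
    state["completed_steps"].append(new_step)
    store.save(state)
    return state


# Two separate "sessions" sharing one store (a temp directory keeps the demo tidy).
with tempfile.TemporaryDirectory() as tmp:
    store = WorkflowStore(Path(tmp) / "project_state.json")
    run_session(store, "draft outline", {"tone": "formal"})
    second = run_session(store, "write introduction")
    print(second["preferences"])      # still remembers the tone from session one
    print(second["completed_steps"])  # both steps, in order
```

In this sketch the second session still knows the preferred tone and the earlier step without being told again. A natively stateful model would presumably make this bookkeeping unnecessary, though how it would do so is not something the leaked material reveals.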

Seeing Clearly

The leaks also hint at major visual processing upgrades:

Pixel-perfect analysis: Instead of working with compressed images, the model would access original image bytes directly. For designers and engineers, this could mean:

- Accurate interpretation of complex diagrams

- No more distorted UI mockup analyses

- True visual understanding at the pixel level
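To see why working from original pixels could matter, consider what a simple downscale does to fine detail. The sketch below is a toy illustration only: it assumes a 2x box-average downscale as a stand-in for whatever preprocessing current models apply (the leak does not say), and the `box_downsample` helper and the tiny 4x4 "mockup" are made up for the example.

```python
def box_downsample(image, factor):
    """Shrink a grayscale image by averaging each factor x factor block.

    Assumes the image height and width are multiples of `factor`.
    """
    height, width = len(image), len(image[0])
    return [
        [
            sum(
                image[y + dy][x + dx]
                for dy in range(factor)
                for dx in range(factor)
            ) / factor**2
            for x in range(0, width, factor)
        ]
        for y in range(0, height, factor)
    ]


# A 4x4 mockup: a 1-pixel-wide white line (255) on a black background (0).
mockup = [[0, 255, 0, 0] for _ in range(4)]

small = box_downsample(mockup, 2)
print(small)  # [[127.5, 0.0], [127.5, 0.0]]
```

The crisp white line becomes a mid-gray smear twice as wide, which is the kind of distortion that can blur thin borders or small text in a UI mockup before a model ever sees them.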

Strategic Moves in the AI Race

Why skip straight to 5.4? Industry watchers see this as OpenAI's counterpunch against competitors like Anthropic's Claude and Google's Gemini. The focus appears to be shifting from benchmark scores to practical utility:

Agent-first design: Reliability for autonomous operation seems prioritized over raw performance metrics.

Hardware hurdles: Supporting these memory features would push current computing infrastructure to its limits, particularly its supply of high-bandwidth memory (HBM), because the memory needed to hold a conversation's context grows with its length.
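A back-of-the-envelope calculation gives a feel for the memory pressure. In a standard transformer, the key-value (KV) cache stores one key and one value vector per token, per layer, per KV head, so its size scales linearly with context length. Nothing about GPT-5.4's architecture is public, so the layer count, head count, and head dimension below are purely hypothetical placeholders, and the estimate ignores optimizations such as cache quantization or compression.

```python
def kv_cache_bytes(tokens, layers, kv_heads, head_dim, bytes_per_value=2):
    """Approximate KV-cache size for a single sequence.

    The leading 2 accounts for storing both a key and a value vector.
    bytes_per_value=2 corresponds to 16-bit floats.
    """
    return 2 * tokens * layers * kv_heads * head_dim * bytes_per_value


# Hypothetical model dimensions, chosen only for illustration.
HYPOTHETICAL_MODEL = dict(layers=80, kv_heads=8, head_dim=128)

for tokens in (128_000, 2_000_000):
    gib = kv_cache_bytes(tokens, **HYPOTHETICAL_MODEL) / 2**30
    print(f"{tokens:>9,} tokens -> {gib:,.1f} GiB")
```

Under these assumed dimensions, a 128,000-token context needs about 39 GiB of cache, while 2 million tokens needs about 610 GiB for a single conversation. That is far more than one accelerator's HBM can hold today, which is why long contexts put such pressure on memory capacity and bandwidth.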

The accidental reveal - though quickly "corrected" by OpenAI - gives us fascinating insight into where conversational AI might be heading next.

Key Points:

- Memory breakthrough: Potential for 2M token context and cross-session state retention

- Visual upgrades: Native high-resolution image processing capabilities

- Strategic timing: Likely response to competitive pressure in the AI space

- Implementation challenges: Will require significant hardware advancements